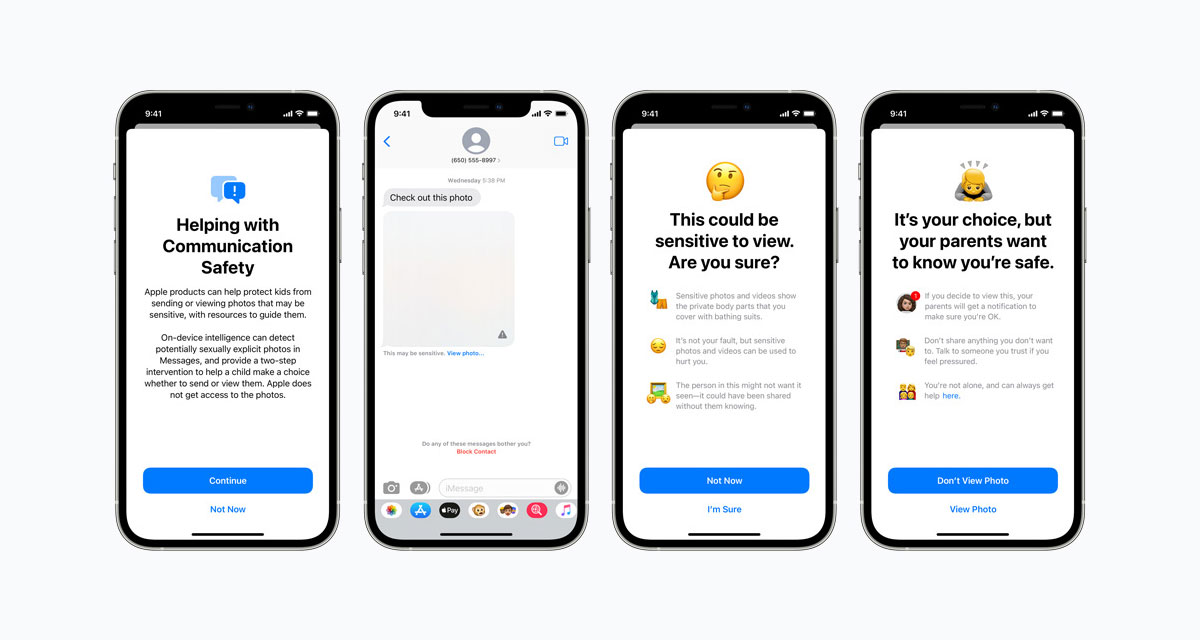

Apple has published a new FAQ designed to answer some of the questions people have raised since the company announced its plans to detect child sexual abuse material (CSAM) in images uploaded to iCloud Photos.

“Since we announced these features, many stakeholders including privacy organizations and child safety organizations have expressed their support of this new solution, and some have reached out with questions,” the FAQ notes, before going on to say that “this document serves to address these questions and provide more clarity and transparency in the process.”

The full document is well worth a read and we won’t reproduce all of it here, but it does answer some of the important questions people have raised over the weekend.

Among them is the question of whether CSAM detection could be used to check for other kinds of content in the future.

Can the CSAM detection system in iCloud Photos be used to detect things other than CSAM?

Our process is designed to prevent that from happening. CSAM detection for iCloud Photos is built so that the system only works with CSAM image hashes provided by NCMEC and other child safety organizations. This set of image hashes is based on images acquired and validated to be CSAM by child safety organizations. There is no automated reporting to law enforcement, and Apple conducts human review before making a report to NCMEC. As a result, the system is only designed to report photos that are known CSAM in iCloud Photos. In most countries, including the United States, simply possessing these images is a crime and Apple is obligated to report any instances we learn of to the appropriate authorities.

Apple also noted that it will “refuse” any government demands to add non-CSAM images to the list of hashes being checked for within iCloud Photos.
