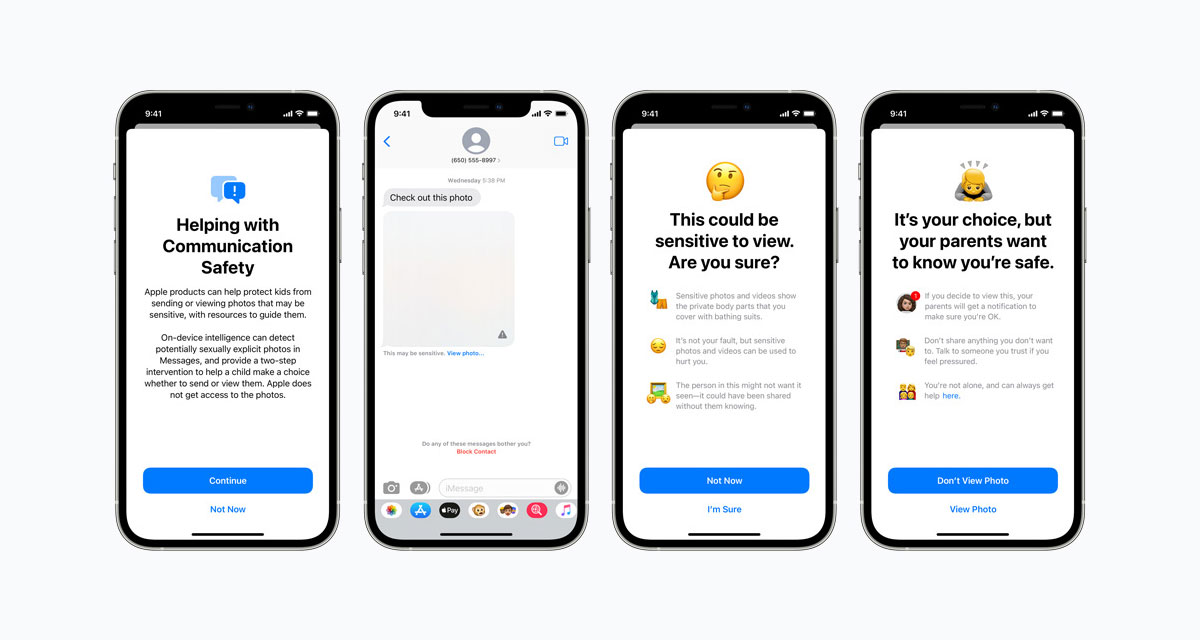

Apple has confirmed that its upcoming CSAM image scanning feature won’t work on devices that don’t have iCloud Photos enabled. In other words, photos on devices that don’t upload them to iCloud won’t be checked for known child sexual abuse material.

Apple caused quite a controversy yesterday when it confirmed that future software updates will have iPhones, iPads, and Macs check photos for child sexual abuse material (CSAM).

However, Apple has confirmed to MacRumors that the feature will only run when iCloud Photos is enabled. CSAM image scanning is not optional and happens automatically, but Apple cannot detect known CSAM images if iCloud Photos is turned off.

Apple has also confirmed that it cannot check iCloud backups for CSAM images, meaning the only time it will look at your photos is when your device is signed in to iCloud and has iCloud Photos enabled.

It isn’t clear whether people with such images on their devices will simply leave iCloud Photos disabled, but we can only hope that they don’t know about this feature and are brought to justice as a result.
