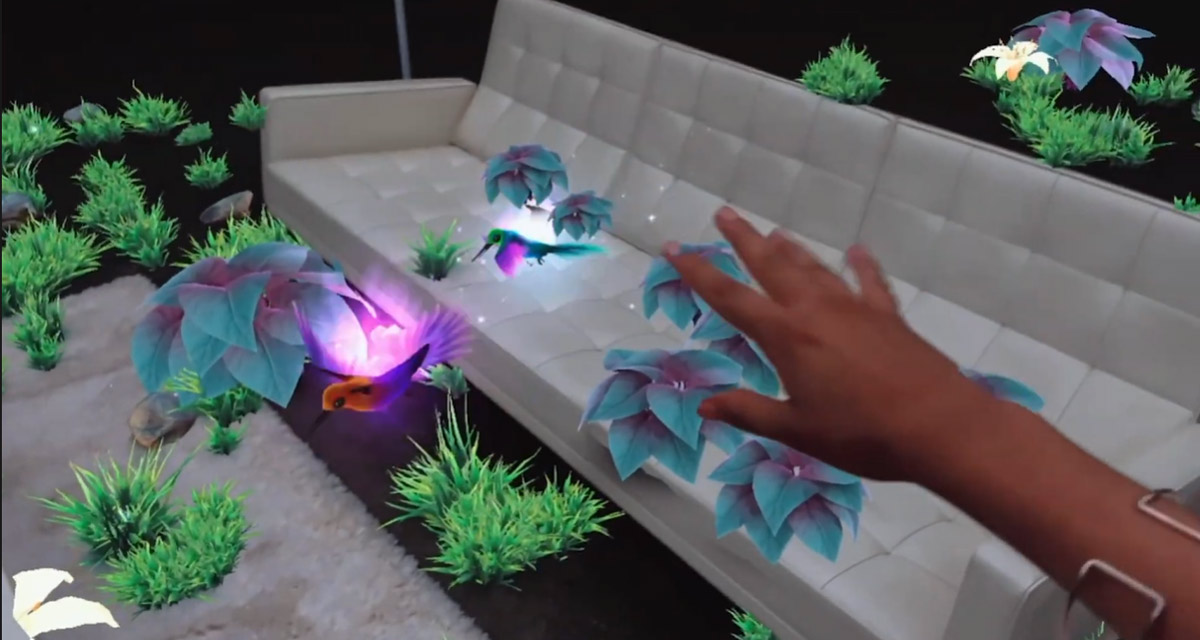

Apple recently announced the iPhone 12 Pro and iPhone 12 Pro Max with support for LiDAR, bringing the depth-sensing technology to the iPhone for the first time. Its addition gives developers new opportunities to build depth-aware augmented reality experiences, and the team at Snapchat is already getting things underway.

Users will be able to create new, LiDAR-powered lenses once their iPhone 12 Pro or iPhone 12 Pro Max arrives.

Apple’s LiDAR Scanner can measure the depth of a scene and the things inside it, including people. Snapchat says that the addition of LiDAR will allow it to create new AR objects that can then be rendered in 3D space and in real time on the new iPhones.

“The addition of the LiDAR Scanner to iPhone 12 Pro models enables a new level of creativity for augmented reality,” said Eitan Pilipski, Snap’s SVP of Camera Platform. “We’re excited to collaborate with Apple to bring this sophisticated technology to our Lens Creator community.”

Anyone who wants to take advantage of the new technology to create Snapchat lenses can download the updated Lens Studio 3.2 directly from the Snapchat website.

The same scanner is also part of the current iPad Pro lineup, although it’s unlikely that many Snapchat users are using their tablet. Apple describes what the LiDAR Scanner does on its website:

The LiDAR Scanner measures absolute depth by timing how long it takes invisible light beams to travel from the transmitter to objects, then back to the receiver. LiDAR works with the depth frameworks of iOS 14 to create a tremendous amount of high-resolution data spanning the camera’s entire field of view. And the beams pulse in nanoseconds, constantly measuring the scene and refining the depth map.
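The timing idea in that description comes down to simple arithmetic: a pulse travels to the object and back, so the distance is half the round-trip time multiplied by the speed of light. The snippet below is only an illustrative sketch of that calculation, not Apple's implementation; the function names are hypothetical and chosen for this example.

```python
# Illustrative time-of-flight arithmetic; not Apple's actual depth pipeline.
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the metre


def depth_from_round_trip(round_trip_seconds: float) -> float:
    """Return the distance in metres for a measured round-trip time.

    The pulse covers the distance twice (out and back), hence the division by 2.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2


def round_trip_from_depth(depth_m: float) -> float:
    """Return the round-trip time in seconds for an object depth_m metres away."""
    if depth_m < 0:
        raise ValueError("depth cannot be negative")
    return 2 * depth_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # A 10-nanosecond round trip corresponds to roughly 1.5 metres.
    print(f"{depth_from_round_trip(10e-9):.3f} m")
```

This is why the timing has to work at the nanosecond scale: an object about 1.5 metres away returns the pulse in roughly 10 nanoseconds.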

Apple’s iPhone 12 Pro is available for pre-order now, while the iPhone 12 Pro Max will go up for pre-order on November 6.
