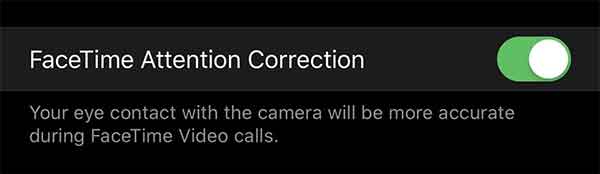

Apple released iOS 13 beta 3 this week, and the update adds a stealthy new feature that makes it appear as though FaceTime users are looking at the caller’s eyes rather than at their screen.

Normally, when you place a FaceTime call, you look at the other person on your phone’s display rather than at the camera. The byproduct is that you aren’t able to make eye contact with the other person.

The new iOS 13 FaceTime Attention Correction feature, discovered by Mike Rundle, fixes that, and it’s all done in software.

The feature currently works only on the iPhone XS and iPhone XS Max, although it’s possible that will change in future updates.

However, it appears to work well in the tests we’ve seen, and while it isn’t a feature many will have been crying out for, it’s one that you’ll immediately appreciate when you start using it.

As Dave Schukin noted via Twitter, it appears that Apple is using an ARKit depth map to adjust the person’s eye position on-screen. He demonstrated this by showing a straight horizontal line being warped as it is moved up and down his face during a call. It’s a pretty dramatic test, and while it’s interesting to see how the feature works, the warping isn’t noticeable during a normal call. It even appears to work when wearing sunglasses.

How iOS 13 FaceTime Attention Correction works: it simply uses ARKit to grab a depth map/position of your face, and adjusts the eyes accordingly.

Notice the warping of the line across both the eyes and nose. pic.twitter.com/U7PMa4oNGN

— Dave Schukin 🇺🇦 (@schukin) July 3, 2019

You can take this for a spin if you’re using the current iOS 13 beta 3 release. The final public-facing update is expected to arrive later this year, likely in September. It is of course possible the feature will change or be removed entirely by then, but hopefully that isn’t the case.
